Futility: A New Antidote Against Response Fraud

by Hubertus Hofkirchner -- Vienna, 20 Nov 2015

Almost inadvertently, we recently discovered a surprising property of our second-generation prediction market platform. Prediction markets were originally developed to increase forecast accuracy and reliability, and one fundamental innovation is how participants are incentivised: traditional surveys pay panellists for their time, whereas we reward participants exclusively for making good predictions. As it turns out, this principle also works as a highly effective deterrent against response fraud.

Anti-Fraud Mechanics

Prediki’s incentive algorithms are designed to elicit participants’ true beliefs. This incentive compatibility requires a true real-time trading engine, unlike some older prediction market platforms with delayed execution or, worse, platforms where respondents merely pretend to buy or sell. An answer’s market price therefore rises in the same millisecond in which a participant buys it. The correct strategy for a trader to maximise his incentive money in this fast-paced trading game is to keep buying an answer as long as he believes it to be more likely than its current price indicates, and to stop once the price is identical to his subjective level of belief in it.
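The "buy until the price equals your belief" rule can be illustrated with a small sketch. Prediki's actual pricing algorithm is not described here, so the example assumes a logarithmic market scoring rule (LMSR) for a single yes/no answer as a stand-in; the liquidity parameter `B` and all function names are hypothetical.

```python
import math

B = 100.0  # hypothetical LMSR liquidity parameter (an assumption, not a Prediki value)

def cost(q_yes, q_no, b=B):
    """LMSR cost function for a binary market (assumed stand-in mechanism)."""
    return b * math.log(math.exp(q_yes / b) + math.exp(q_no / b))

def price_yes(q_yes, q_no, b=B):
    """Current price of the 'yes' answer, always between 0 and 1."""
    return 1.0 / (1.0 + math.exp((q_no - q_yes) / b))

def shares_to_reach(target_price, q_yes, q_no, b=B):
    """Number of 'yes' shares to buy so that the price lands on target_price."""
    logit = math.log(target_price / (1.0 - target_price))
    return b * logit - (q_yes - q_no)

def expected_profit(belief, q_yes, q_no, shares, b=B):
    """Expected profit of buying `shares` of 'yes', valued at the trader's belief.

    Each share pays 1 if the answer turns out true, so the expected payout
    is belief * shares; the amount paid is the change in the cost function.
    """
    paid = cost(q_yes + shares, q_no, b) - cost(q_yes, q_no, b)
    return belief * shares - paid

# A trader who believes the answer is 70% likely meets a market priced at 50%.
belief = 0.7
optimal_shares = shares_to_reach(belief, 0.0, 0.0)
optimal_profit = expected_profit(belief, 0.0, 0.0, optimal_shares)
print(f"buy {optimal_shares:.1f} shares, expected profit {optimal_profit:.2f}")
```

Under this assumed mechanism, buying fewer or more shares than `optimal_shares` lowers the expected profit, which is why a profit-maximising trader stops exactly where the price matches his belief.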

Enter Futility

Once the price matches his belief, the trader will logically stop trading because he must expect a loss from any further transaction: if he bought, he would pay too much; if he sold, he would sell too cheaply. The same is true even if the trader engages in response fraud by participating twice. His fake identity would face the same subjectively correct price as his real one. In stark contrast to a traditional survey, the respondent can expect no additional profit.

The logic still holds when prices fluctuate, because on Prediki the trader can always make further transactions under his true identity until market prices readjust to where he believes they should be.
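The futility argument can be checked numerically. The sketch below again assumes an LMSR-style cost function as a stand-in for Prediki's undisclosed algorithm; `B`, `SURVEY_FEE` and the function names are hypothetical. It compares what a second, fake identity can earn in the market with what it earns in a fixed-fee survey.

```python
import math

B = 100.0         # hypothetical liquidity parameter (assumption)
SURVEY_FEE = 1.0  # hypothetical fixed incentive per completed survey

def cost(q, b=B):
    """LMSR-style cost of a binary market with net position q in the answer."""
    return b * math.log(math.exp(q / b) + 1.0)

def price(q, b=B):
    """Market price of the answer at net position q."""
    return 1.0 / (1.0 + math.exp(-q / b))

def expected_profit(belief, q, shares, b=B):
    """Expected profit of trading `shares` (negative = selling) at state q."""
    return belief * shares - (cost(q + shares, b) - cost(q, b))

def best_trade(belief, q, b=B):
    """Grid-search the whole-share trade size with the highest expected profit."""
    return max(range(-200, 201), key=lambda s: expected_profit(belief, q, s, b))

belief = 0.7
q_start = 0.0
q_at_belief = B * math.log(belief / (1.0 - belief))  # state where price == belief

# The real identity trades until the price equals the trader's belief.
real_profit = expected_profit(belief, q_start, q_at_belief - q_start)

# The fake identity now faces that same price: its best trade is no trade.
fake_shares = best_trade(belief, q_at_belief)
fake_profit = expected_profit(belief, q_at_belief, fake_shares)

# Splitting the same trade across both identities does not help either.
half = (q_at_belief - q_start) / 2
split_profit = (expected_profit(belief, q_start, half)
                + expected_profit(belief, q_start + half, half))

# A fixed-fee survey, by contrast, pays once per identity.
survey_one, survey_two = SURVEY_FEE * 1, SURVEY_FEE * 2

print(f"market: one identity {real_profit:.2f}, extra from fake {fake_profit:.2f}")
print(f"survey: one identity {survey_one:.2f}, two identities {survey_two:.2f}")
```

In this model the second identity adds nothing in the market, whether it trades after the first or shares the trade with it, while the fixed survey fee simply doubles.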

Empirical Evidence

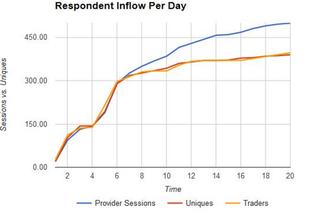

We can now demonstrate these mechanics at work. Prediki respondents must often complete a short traditional questionnaire for profiling and screening before they get access to the actual prediction market. The chart above shows sample arrival for such a method-mix project, in which a significant number of fraudsters participated in a traditional survey with a target sample size of n=500.

Of course, panel supplier rules and technology are designed to ensure that a respondent cannot participate twice in the same survey. In this particular project, the supplier ran out of qualified respondents and had to call on a panel partner to get to the required number.

The Gotcha Moment

Now, look at the sixth day of the project, when a very strange discrepancy began. The number of sessions by "completes" arriving from the traditional survey part (blue line) and the number of traders entering the market (yellow line) started to follow distinctly different paths. Despite the added raw sample, the number of new traders stayed rather flat. Our suspicions raised, we used a technical trick to find out the number of real respondents, and voilà: the proportion of unique, true humans arriving (red line) to traders entering had remained perfectly stable.

What happened? We concluded that many respondents had signed up both with our direct panel supplier and with its later panel partner, undermining their individual duplicate checks. The fraudsters completed the traditional survey twice to grab the fixed incentive. However, they understood very well the futility of any attempt to defraud the prediction market's reward money in this manner. Their criminal energy simply evaporated in the absence of a financial incentive to cheat.

Happy End

The traditional part of the method-mix project only served for screening purposes, so a modicum of fraudsters getting past the sample providers’ first line of defence was of little consequence. The intrinsic deterrent of the prediction market successfully immunised the actual project against this fraud. The results for our client were produced with true, unique respondents and came out well: both valid and accurate.

Hubertus Hofkirchner is CEO of Prediki Prediction Services and a self-confessed prediction market evangelist. He also enjoys playing detective once in a while.

The Response Fraud mini-series:

Part 1: Response Fraud in Market Research: How Bad Is It?

Part 2: How To Detect Response Fraudsters

Part 3: Futility: A New Antidote Against Response Fraud
